From "4.2: Hyperplanes" (David Cherney, Tom Denton, & Andrew Waldron, University of California, Davis): Vectors in R^n can be hard to visualize.

In order to plot a 2D/3D decision boundary, your data has to be 2D/3D (2 or 3 features, which is exactly what is happening in the link provided: they only have 3 features, so they can plot all of them). With 50 features you are left with statistical analysis, not actual visual inspection. You can of course take a look at some slices (select 3 features, or the main components of a PCA projection). If you are not familiar with the underlying linear algebra, you can simply use the gmum.r package, which does this for you. Simply train an SVM and plot it, forcing the "pca" visualization, like here:

    # We will perform basic classification on breast cancer dataset
    # We can pass either formula or explicitly X and Y
    pred <- predict(svm, )

For more examples you can refer to the project website.

However, this only shows the projections of the points and their classification. You cannot see the hyperplane, because it is a high-dimensional object (in your case 49-dimensional), and in such a projection the hyperplane would be the whole screen. Exactly no pixel would be left "outside". Think about it in these terms: if you have a 3D space with a hyperplane inside, that hyperplane is a 2D plane. If you now try to plot it in 1D, you will end up with the whole line "filled" by your hyperplane, because no matter where you place a line in 3D, the projection of the 2D plane onto this line will fill it up. The only other possibility is that the line is perpendicular to the plane, and then the projection is a single point. The same applies here: if you try to project a 49-dimensional hyperplane onto 3D, you will end up with the whole screen "black".
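The projection argument can be checked numerically. The following is a minimal sketch, not part of the original answer: `projected_dimension` is a hypothetical helper name, and the random hyperplane and directions are illustrative assumptions. It computes how many dimensions the image of a hyperplane occupies after projecting it onto a few chosen directions.

```python
import numpy as np


def projected_dimension(normal, directions, tol=1e-10):
    """Dimension of the image of the hyperplane {x : normal @ x + b = 0}
    after projecting onto the span of `directions` (one direction per row).
    The offset b only shifts the image, so it does not affect the dimension."""
    normal = np.asarray(normal, dtype=float)
    directions = np.atleast_2d(np.asarray(directions, dtype=float))
    # Rows 1.. of vt form an orthonormal basis of the vectors orthogonal
    # to `normal`, i.e. of the directions lying inside the hyperplane.
    _, _, vt = np.linalg.svd(normal[None, :])
    basis = vt[1:]
    # Coordinates of those basis vectors along the chosen directions.
    image = directions @ basis.T
    return np.linalg.matrix_rank(image, tol=tol)


# A 2D plane in 3D (normal along z) projected onto a line.
plane_normal = np.array([0.0, 0.0, 1.0])
dim_generic_line = projected_dimension(plane_normal, [1.0, 0.0, 0.0])
dim_perpendicular_line = projected_dimension(plane_normal, [0.0, 0.0, 1.0])

# A 49-dimensional hyperplane in 50D projected onto three arbitrary directions.
rng = np.random.default_rng(0)
dim_screen = projected_dimension(rng.normal(size=50), rng.normal(size=(3, 50)))

print(dim_generic_line, dim_perpendicular_line, dim_screen)
```

A result of 1 for the generic line means the whole line is covered, 0 for the perpendicular line means a single point, and 3 for the 50D case means the projected hyperplane covers the entire 3D view, which is why nothing useful can be drawn.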

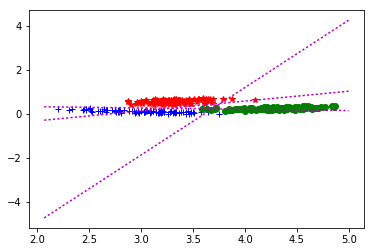

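I'm currently using svc to separate two classes of data (the features below are named data and the labels are condition). After fitting the data using GridSearchCV I get a classification score of about …. Then I tried to plot as suggested on the scikit-learn website:

    # get the separating hyperplane
    w = clf.coef_[0]
    a = -w[0] / w[1]
    xx = np.linspace(-5, 5)
    yy = a * xx - (clf.intercept_[0]) / w[1]

    # plot the parallels to the separating hyperplane that pass through the support vectors
    b = clf.support_vectors_[0]
    yy_down = a * xx + (b[1] …

The short answer is: you cannot. The only thing you can do are some rough approximations, reductions and projections, but none of these can actually represent what is happening inside.

You cannot visualize the decision surface for a lot of features. This is because the dimensions will be too many and there is no way to visualize an N-dimensional surface. However, you can use 2 features and plot nice decision surfaces as follows. I have also written an article about this.

Case 1: 2D plot for 2 features and using the iris dataset

    import numpy as np
    import matplotlib.pyplot as plt
    from sklearn import datasets
    from sklearn.svm import SVC

    iris = datasets.load_iris()
    X = iris.data[:, :2]  # we only take the first two features.
    y = iris.target

    def make_meshgrid(x, y, h=.02):
        # grid covering the data range, with a margin of 1 on each side
        x_min, x_max = x.min() - 1, x.max() + 1
        y_min, y_max = y.min() - 1, y.max() + 1
        xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
        return xx, yy

    def plot_contours(ax, clf, xx, yy, **params):
        # predict the class at every grid point and draw filled contours
        Z = clf.predict(np.c_[xx.ravel(), yy.ravel()]).reshape(xx.shape)
        return ax.contourf(xx, yy, Z, **params)

    clf = SVC(kernel='linear').fit(X, y)

    fig, ax = plt.subplots()
    title = ('Decision surface of linear SVC ')
    X0, X1 = X[:, 0], X[:, 1]
    xx, yy = make_meshgrid(X0, X1)
    plot_contours(ax, clf, xx, yy, cmap=plt.cm.coolwarm, alpha=0.8)
    ax.scatter(X0, X1, c=y, cmap=plt.cm.coolwarm, s=20, edgecolors='k')
    ax.set_title(title)
    plt.show()

Case 2: 3D plot for 3 features and using the iris dataset

    import numpy as np
    import matplotlib.pyplot as plt
    from sklearn import datasets
    from sklearn.svm import SVC

    iris = datasets.load_iris()
    X = iris.data[:, :3]  # we only take the first three features.
    Y = iris.target

    # make it a binary classification problem, so there is a single separating plane
    X = X[np.logical_or(Y == 0, Y == 1)]
    Y = Y[np.logical_or(Y == 0, Y == 1)]

    clf = SVC(kernel='linear').fit(X, Y)

    # The equation of the separating plane is given by all x so that np.dot(svc.coef_[0], x) + b = 0.
    # Solve for the third coordinate (z).
    z = lambda x, y: (-clf.intercept_[0] - clf.coef_[0][0]*x - clf.coef_[0][1]*y) / clf.coef_[0][2]

    tmp = np.linspace(-5, 5, 30)
    x, y = np.meshgrid(tmp, tmp)

    fig = plt.figure()
    ax = fig.add_subplot(111, projection='3d')
    ax.plot3D(X[Y == 0, 0], X[Y == 0, 1], X[Y == 0, 2], 'ob')
    ax.plot3D(X[Y == 1, 0], X[Y == 1, 1], X[Y == 1, 2], 'sr')
    ax.plot_surface(x, y, z(x, y))
    ax.view_init(30, 60)
    plt.show()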
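As a sanity check on the plane equation used in Case 2, the sketch below solves `w . x + b = 0` for the third coordinate and confirms that the resulting points sit on the decision boundary of a fitted linear `SVC`. This is an illustrative addition under stated assumptions: the helper name `plane_z`, the grid ranges, and the restriction to iris classes 0 and 1 are choices made for the example, not part of the original answer.

```python
import numpy as np
from sklearn import datasets
from sklearn.svm import SVC

iris = datasets.load_iris()
mask = iris.target < 2            # keep classes 0 and 1: one separating plane
X = iris.data[mask, :3]           # first three features only
y = iris.target[mask]

clf = SVC(kernel="linear").fit(X, y)
w = clf.coef_[0]                  # normal vector of the separating plane
b = clf.intercept_[0]             # offset of the separating plane


def plane_z(x, y):
    """Third coordinate of the plane w[0]*x + w[1]*y + w[2]*z + b = 0."""
    return (-b - w[0] * x - w[1] * y) / w[2]


# Build a small grid in the first two features and lift it onto the plane.
gx, gy = np.meshgrid(np.linspace(4.0, 7.0, 5), np.linspace(2.0, 4.5, 5))
points = np.c_[gx.ravel(), gy.ravel(), plane_z(gx, gy).ravel()]

# On the boundary the decision function should vanish up to rounding error.
max_abs_residual = np.abs(clf.decision_function(points)).max()
print(max_abs_residual)
```

If the printed residual is not close to zero, the most likely cause is a sign or index slip in the expression for `z`, which is easy to introduce when the indices on `coef_` and `intercept_` are dropped.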
